Introduction

This page shows how LTSMin copes with the LTLFireability examination compared with the other participating tools. It considers the «Surprise» models.

The next sections show charts comparing performance in terms of both memory and execution time. The x-axis corresponds to the challenging tool, whereas the y-axis represents LTSMin's performance. Thus, points below the diagonal of a chart denote comparisons favorable to LTSMin, while the others correspond to situations where the challenging tool performs better.

You may also find points outside the range of a chart; these denote cases where at least one tool could not answer appropriately (error, time-out, could not compute, or did not compete).
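The reading rule described above can be sketched in a few lines of Python. This is only an illustration: the function name `classify_point` and the sample measurements are hypothetical and are not taken from the contest data. It assumes that lower values (less memory, less time) are better and that a missing measurement is represented by `None`.

```python
from typing import Optional


def classify_point(challenger: Optional[float], ltsmin: Optional[float]) -> str:
    """Classify one chart point: x is the challenging tool, y is LTSMin."""
    # A missing value stands for error, time-out, cannot compute or did not compete.
    if challenger is None or ltsmin is None:
        return "out of range"
    if ltsmin < challenger:
        return "favours LTSMin"       # point below the diagonal
    if ltsmin > challenger:
        return "favours challenger"   # point above the diagonal
    return "on the diagonal"


# Hypothetical (challenger, LTSMin) measurements, for illustration only.
samples = [(12.0, 3.5), (0.8, 4.1), (None, 2.0), (5.0, 5.0)]
labels = [classify_point(x, y) for x, y in samples]
print(labels)
```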

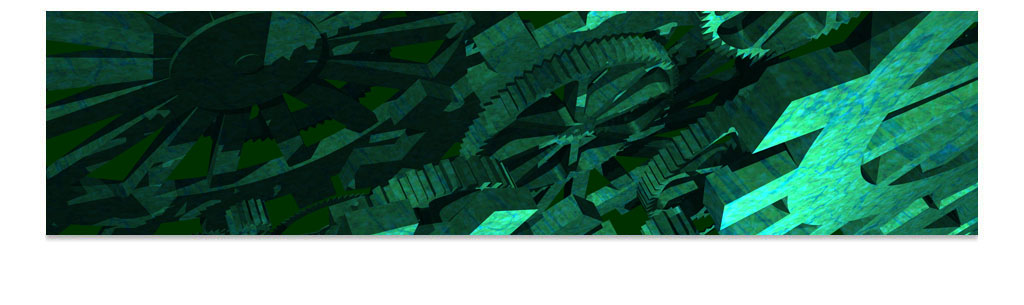

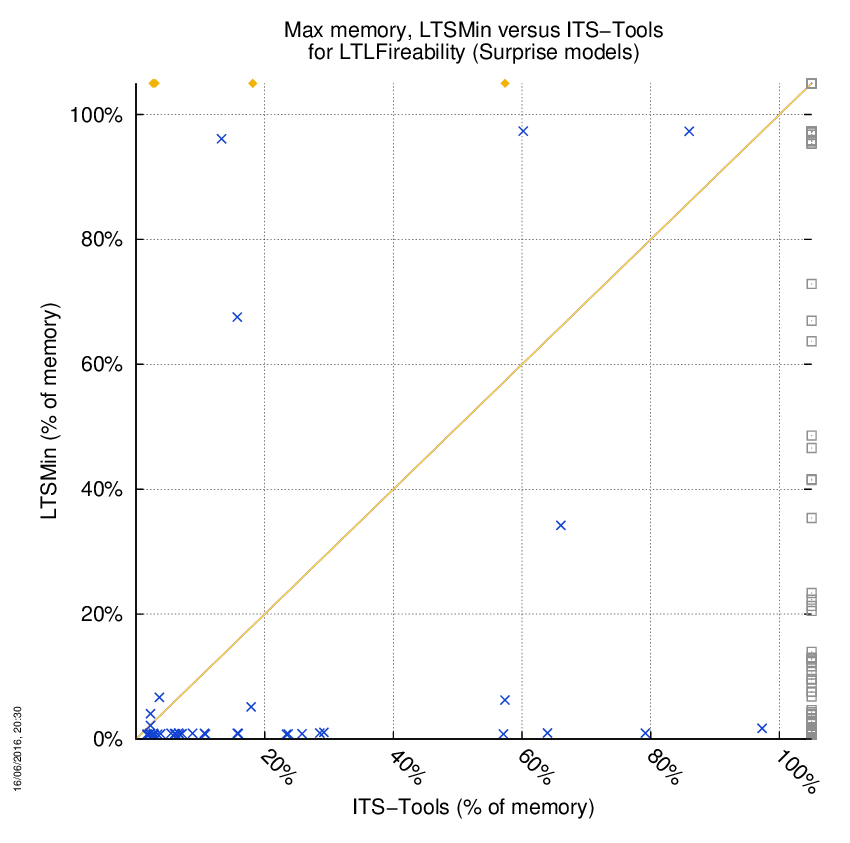

LTSMin versus ITS-Tools

Some statistics are displayed below, based on 278 runs (139 for LTSMin and 139 for ITS-Tools, so there are 139 points on each of the two charts). Each execution was allowed 1 hour and 16 GByte of memory. Performance charts comparing LTSMin to ITS-Tools follow.

| Statistics on the execution | LTSMin | ITS-Tools | Both tools | | LTSMin | ITS-Tools |
|---|---|---|---|---|---|---|
| Computed OK | 86 | 4 | 44 | Smallest Memory Footprint | | |
| Do not compete | 9 | 0 | 0 | Times tool wins | 124 | 10 |
| Error detected | 0 | 0 | 0 | Shortest Execution Time | | |
| Cannot Compute + Time-out | 0 | 91 | 0 | Times tool wins | 126 | 8 |
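As a quick sanity check on the LTSMin versus ITS-Tools figures, the sketch below tests whether the "Computed OK" counts agree with the "Times tool wins" counts. It rests on an assumed reading of the table: the three "Computed OK" columns count the runs solved by LTSMin only, by ITS-Tools only, and by both tools, and a win is recorded for every run that at least one tool solved. The variable names are illustrative.

```python
# Figures copied from the LTSMin versus ITS-Tools table.
computed_ok = {"LTSMin only": 86, "ITS-Tools only": 4, "both": 44}
memory_wins = {"LTSMin": 124, "ITS-Tools": 10}
time_wins = {"LTSMin": 126, "ITS-Tools": 8}

# Runs for which at least one tool produced a result (assumed interpretation).
compared = sum(computed_ok.values())

# Under that interpretation, both win tallies should add up to the same total.
consistent = compared == sum(memory_wins.values()) == sum(time_wins.values())

# Share of the compared runs won by LTSMin, as percentages.
memory_share = round(100 * memory_wins["LTSMin"] / compared, 1)
time_share = round(100 * time_wins["LTSMin"] / compared, 1)

print(compared, consistent, memory_share, time_share)
```

With these numbers the totals match (134 compared runs), which is consistent with the assumed reading but does not prove it.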

On the charts below, separate markers denote cases where both tools computed a result without error, cases where at least one tool did not compete, cases where at least one tool computed a bad value, and cases where at least one tool stated it could not compute a result or timed out.

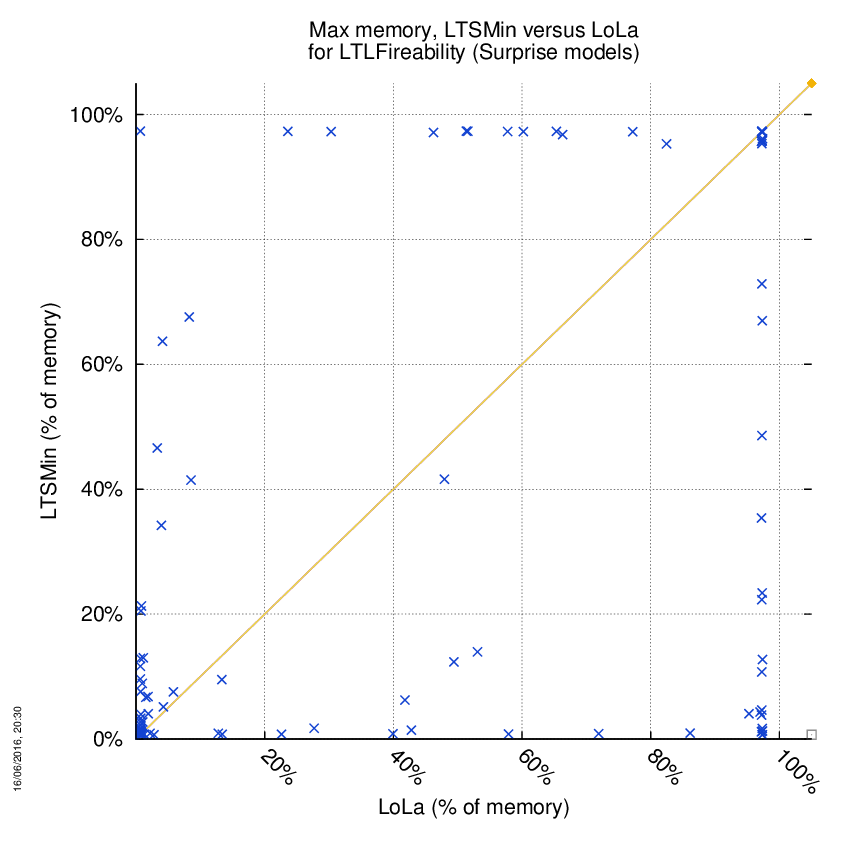

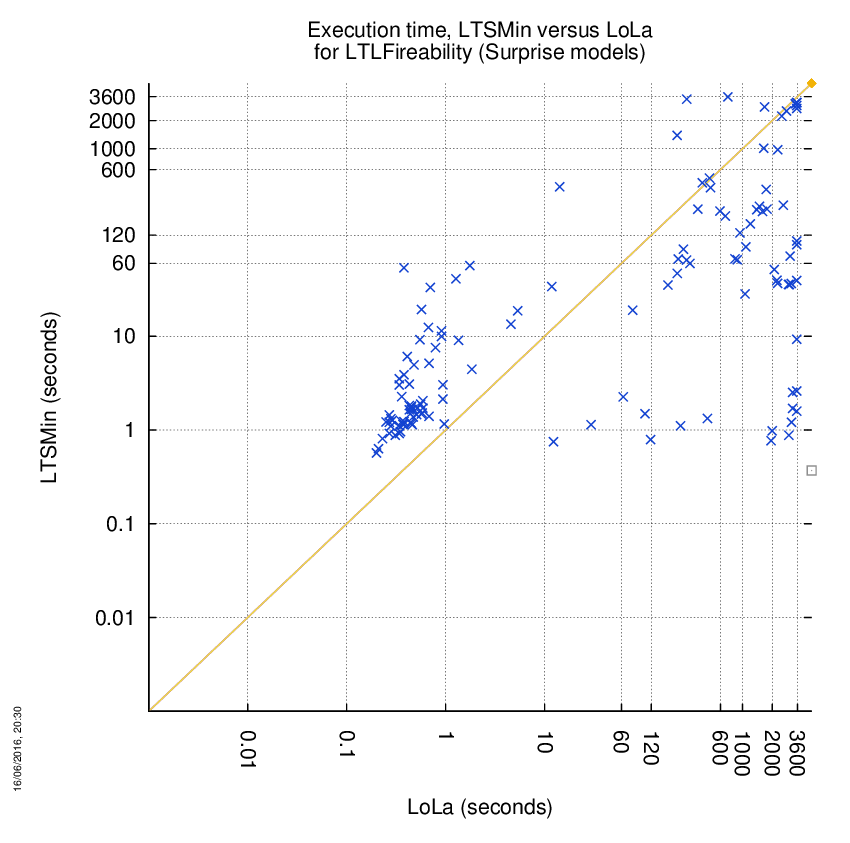

LTSMin versus LoLa

Some statistics are displayed below, based on 278 runs (139 for LTSMin and 139 for LoLa, so there are 139 points on each of the two charts). Each execution was allowed 1 hour and 16 GByte of memory. Performance charts comparing LTSMin to LoLa follow.

| Statistics on the execution | LTSMin | LoLa | Both tools | | LTSMin | LoLa |
|---|---|---|---|---|---|---|
| Computed OK | 1 | 0 | 129 | Smallest Memory Footprint | | |
| Do not compete | 0 | 0 | 9 | Times tool wins | 47 | 83 |
| Error detected | 0 | 0 | 0 | Shortest Execution Time | | |
| Cannot Compute + Time-out | 0 | 1 | 0 | Times tool wins | 59 | 71 |

On the charts below, separate markers denote cases where both tools computed a result without error, cases where at least one tool did not compete, cases where at least one tool computed a bad value, and cases where at least one tool stated it could not compute a result or timed out.

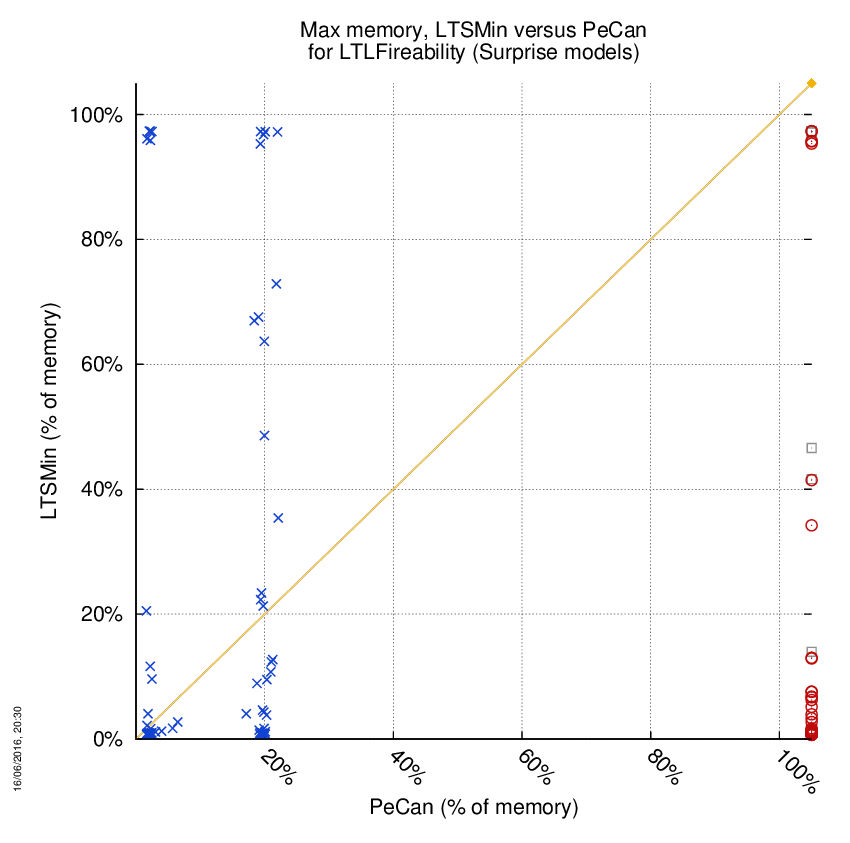

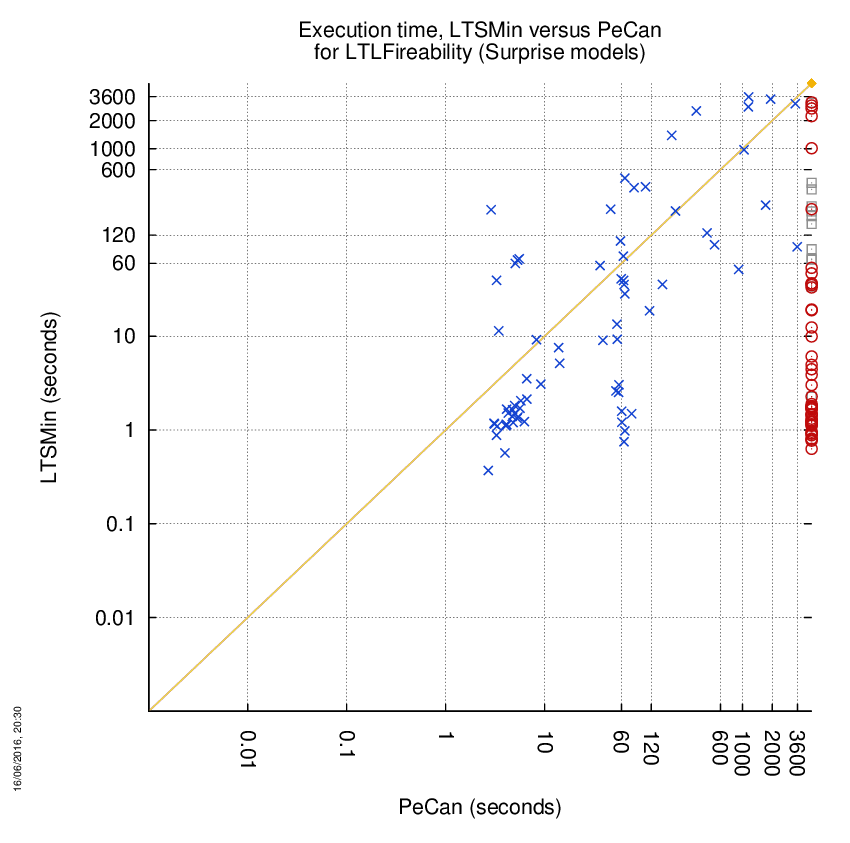

LTSMin versus PeCan

Some statistics are displayed below, based on 278 runs (139 for LTSMin and 139 for PeCan, so there are 139 points on each of the two charts). Each execution was allowed 1 hour and 16 GByte of memory. Performance charts comparing LTSMin to PeCan follow.

| Statistics on the execution | LTSMin | PeCan | Both tools | | LTSMin | PeCan |
|---|---|---|---|---|---|---|
| Computed OK | 60 | 0 | 70 | Smallest Memory Footprint | | |
| Do not compete | 0 | 0 | 9 | Times tool wins | 106 | 24 |
| Error detected | 0 | 48 | 0 | Shortest Execution Time | | |
| Cannot Compute + Time-out | 0 | 12 | 0 | Times tool wins | 110 | 20 |

On the charts below, separate markers denote cases where both tools computed a result without error, cases where at least one tool did not compete, cases where at least one tool computed a bad value, and cases where at least one tool stated it could not compute a result or timed out.